Did you know? R Language Is Also Important to Be Mastered by Non-ITs

March 22, 2022

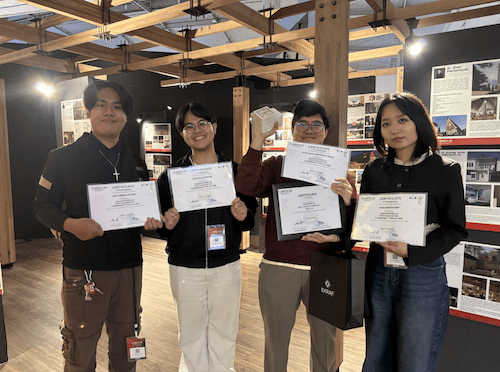

Congratulations! Candy Monster from UMN Pictures Receives Another Award

March 22, 2022

Tetris Program (dok.DQLab)

TANGERANG – On Monday (07/03/22), DQLab virtually conducted the 3rd session of the Tetris Program, discussing how to use Pentaho Data Integration (PDI) to extract data from text and excel files. This is also a follow-up session from the previous PDI session, discussing using PDI as an ETL tool. The same speaker from the last PDI session, Iqbal Hanif, a Big Data Analytics at Telkom Indonesia, returned to present the materials regarding the topic.

Talking about extraction, Iqbal opened the session by briefly bringing materials from the previous session related to this session’s materials. “In simple words, extracting is a process of collecting data from several sources. The sources include database, file, tools or applications, APIs, websites, sensors, etc.”

Extracting data on PDI can be done by using the steps in the “Input” folder. Steps often used are table input, Cvs file input, Microsoft excel file input, Text file input, JSON input and Data grid. In addition, to extract data, you can use other steps such as an HTTP client.

In this session, Iqbal showed the participants how to extract from tabular data, which is a structured data from excel and MySQL using the file from the previous PDI session. He shared that the steps needed are the Microsoft Excel input and the Table input.

“How to read files from Excel is something that SQL doesn’t accommodate. So if I would get data from Excel, the fastest way is to use Pentaho,” Iqbal said.

Iqbal also shared how to extract data from MySQL. Firstly, make sure that the database connection to the localhost in the PDI is available. If unavailable, you can do the steps taught in the previous session. Secondly, add a “Table Input” step to the canvas, then right-click and edit. Select “Connection” according to the connection’s name made to localhost, and write the syntax/query to retrieve data in the ms_branch table.

Also read Let’s Get to Know R, One of the Tools in Data Science

“At this stage, basic SQL must also be understood and practiced continuously to hone SQL skills,” Iqbal said.

In addition, Iqbal also gave directions and showed how to extract unstructured data. The goal is to extract text data from websites (HTML). The steps needed are Data grid and HTTP client. At this stage, Iqbal extracted the HTML code (scraping) from online news articles to get the text of the news. Iqbal noted that people need to make sure all the texts from the articles are readable on one page. It can be done by adding “?page=all” then at the “data-grip” step, make sure there are no empty numbered rows and pay attention to the “#” column, because this can trigger an error.

At the end of the session, Iqbal said that, according to him, SQL is the programming language most often used by people with various backgrounds. Iqbal hopes with Pentah; participants can like coding as an alternative.

“Unlike Python, SQL is universal,” Iqbal said.

As a programming language often used, SQL is an alternative for beginners when learning to extract data. Mastering the basics of SQL is very important. Let’s learn with DQLab.id to become an amazing data talent.

by Lathifa Lisa – DQLab

Kuliah di Jakarta untuk jurusan program studi Informatika| Sistem Informasi | Teknik Komputer | Teknik Elektro | Teknik Fisika | Akuntansi | Manajemen| Komunikasi Strategis | Jurnalistik | Desain Komunikasi Visual | Film dan Animasi | Arsitektur | D3 Perhotelan | International Program, di Universitas Multimedia Nusantara. www.umn.ac.id